ולידציה – Operation Qualification – OQ- part 2

Protocol Preparation Overview

This article provide overviews of the main test procedures and verification, and their purposes. This OQ procedure is generic, and relevance of the test procedures and verifications provided below depends on the composition of the system under validation

:Note

As mentioned in the previous artcle on OQ Protocol Contents, the final contents of the OQ protocol are tailored according to the type and size of the system under validation. Since this document is generic, it covers test procedures that may not be necessary in small or simple systems

:Where relevant, the OQ procedure may consists of five main test procedures

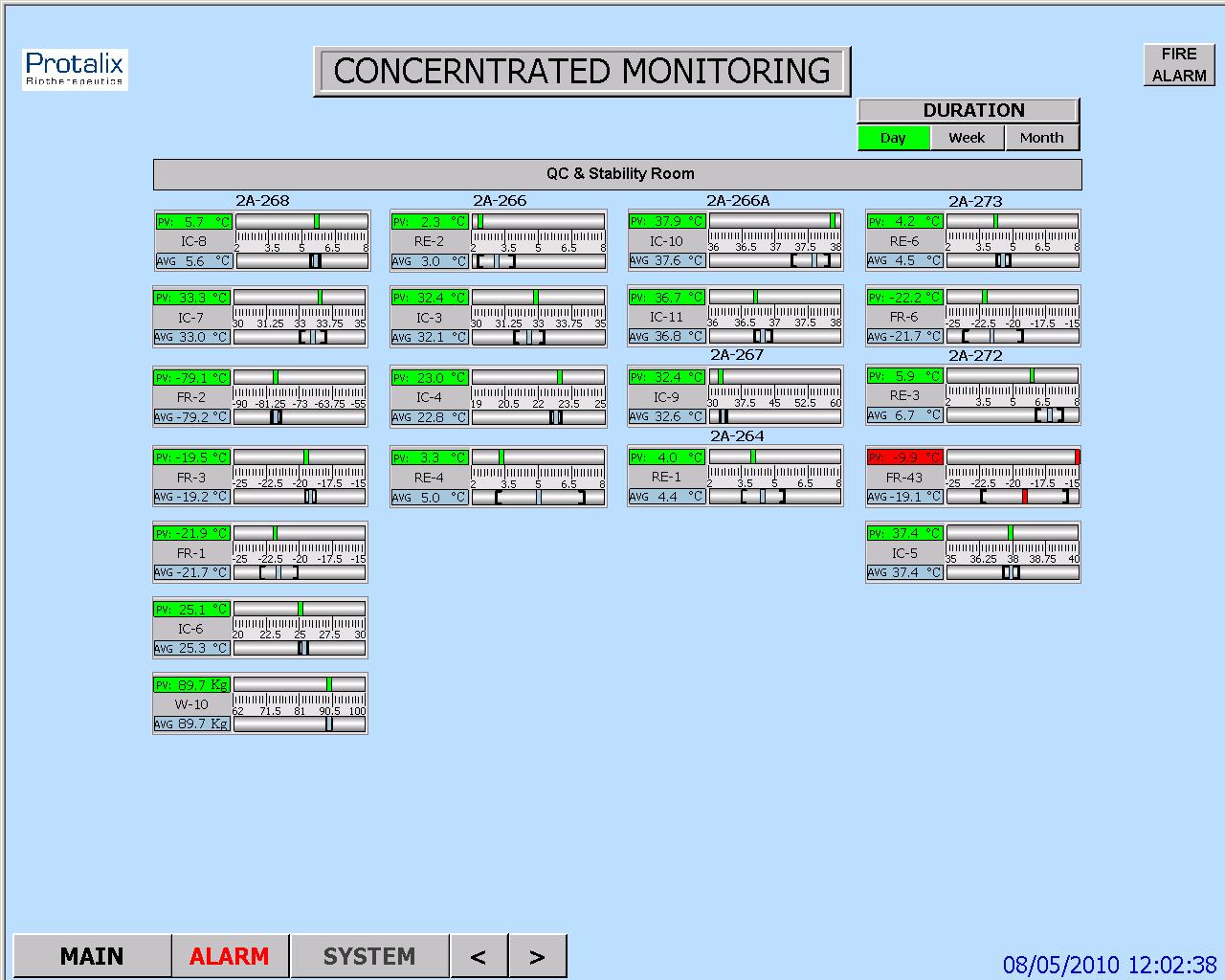

HMI Screen Test Procedure – intended to verify that the HMI screens provide the graphic design and functionality required for properly monitoring and controlling the environmental conditions in the user's

Parameters Lists Verification – intended to verify that the system parameters lists comply with the values specified in the SSO

: Two types of parameters are checked

Process parameters

Alarm parameters

System Operation Test Procedure – intended to verify that system monitoring and control components are capable of maintaining the user's facility within specified temperature, humidity and pressure levels

System Alarms Test Procedure – intended to verify that when the specified temperature, humidity and pressure levels exceed its specified limits, an alarm message is displayed, and an SMS or e-mail notification is sent to relevant personnel

Test of HMI Compliance with 21 CFR Part 11 – This test is intended to verify that the HMI meets Valtech Cardio's URS regarding 21 CFR Part 11. These : requirements are divided into 5 categories for the sake of clarity

Security

Electronic Records

Audit Trail

Archive

Backup

HMI Screen Test Procedure

This section includes specific test procedures for all the relevant HMI screens, where each test procedure serves to verify that the specific HMI screen provides the graphic design and functionality required for its intended purpose within the monitoring and controlling functions

Parameters Lists Verification

:This procedure covers the verifications of two types of parameters

Process parameters

Alarm parameters

Process Parameters List Verification

This procedure is intended to verify that:

The system enables to set the default values of the process set-points of each monitored environmental parameter.

The system provides automatically the corresponding low and high limits of these default values. These limit values are listed in below

The system rejects all the SP values that exceed their allowed ranges, as specified in the SSO

Alarm Parameters List Verification

:This procedure is intended to verify that

The system enables to set the default values of the alarm set-points of each monitored environmental parameter

The system provides automatically the corresponding low and high limits of these default values

The system rejects all the SP values that exceed their allowed ranges, as specified in the SSO

System Operation Test Procedure

This procedure is intended to verify that system monitoring and control components are capable of maintaining the user's facility within specified temperature, humidity and pressure levels. For this purpose, it is necessary to temporarily change set-points, in order to activate the control devices

System Alarms Test Procedures

This procedure is intended to verify that, when an environmental parameter value exceeds the specified normal range, the system reacts as specified in the SSO, by providing a specified alarm indication, a relevant e-mail alarm message, and relevant records in the Current Alarms and Historical Alarms screens

Test of HMI Compliance with 21 CFR Part 11

This test is intended to verify that the HMI meets the user's URS regarding 21 CFR Part 11. These requirements are divided into 5 categories for the sake of :clarity

Security

Electronic Records

Audit Trail

Archive

Backup